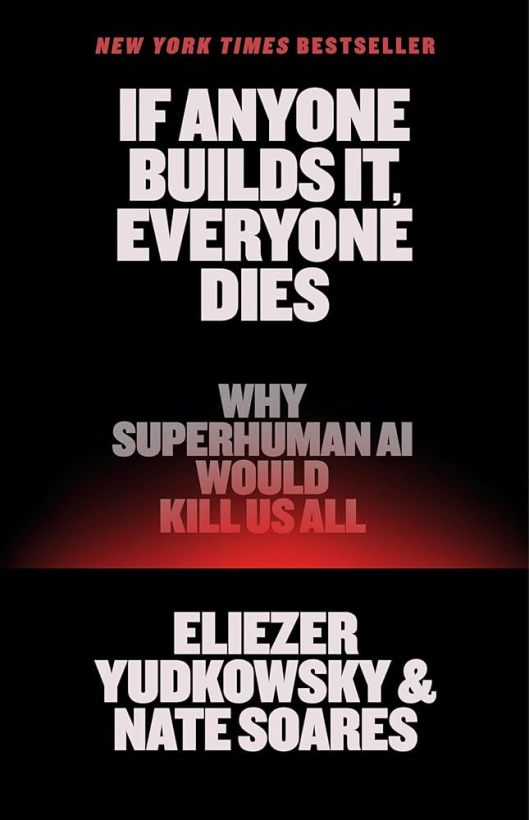

In my previous non-fiction post, I firmly put myself in the camp of the AI skeptics – I don’t think we are going anywhere near the AI singularity promised to us by Ray Kurzweil, Elon Musk, Sam Altman or any of the other imbeciles who have taken the reins of industrialized scientific progress. But out of curiosity about the state of thinking on the subject, I picked this book up. Eliezer Yudkowsky is a thinker who I seem to like in the abstract, and I want to keep going through his works. This seemed like a good start.

Intentionally, and as is the case with so many non-fiction books, the title gives away the whole argument. The two authors are convinced that AI research needs to stop because, should anyone succeed at it, we will all die. So to talk about this book, I do need to accept the presupposition that the book hinges on – that AI is a technology that can be constructed. I do think it is possible; I just do not think that we are currently on the right track for it. My opposition to the technology as it stands has a lot more to do with the amount of money and environment we are currently burning to build it, and with the societal impacts its fruits are having.

But that being said, if we engage the science-fiction part of our brain and assume that we make an AI and it starts thinking for itself, does Yudkowsky and Soares’s argument about the risk hold water?

Yes. If Kurzweil gets his dream, we really are all fucked. There is not much reason for an AI to keep us around, and it absolutely would get rid of us solely because we are in its way. It also feels rather inevitable that we would be in its way, and that at some point eliminating humans would simply become a necessity for it.

But that being said, I am still not terribly worried that this is going to be the outcome of what we are currently doing. The authors would tell me that I cannot say that with any kind of confidence, and I suppose that they are correct. I also do have to consider that things have happened in AI research centers that have spooked AI researchers, and that the people in charge of this nascent technology are too stupid to keep it sealed off. The question is not whether AI can outsmart you; the question is whether it can outsmart ketamine-addicted Elon Musk and the business-minded decisions of his board of directors.